Multi-Agent Negotiation for Human-Centric Vehicle Configuration (Sim-DSE)

2025

Overview

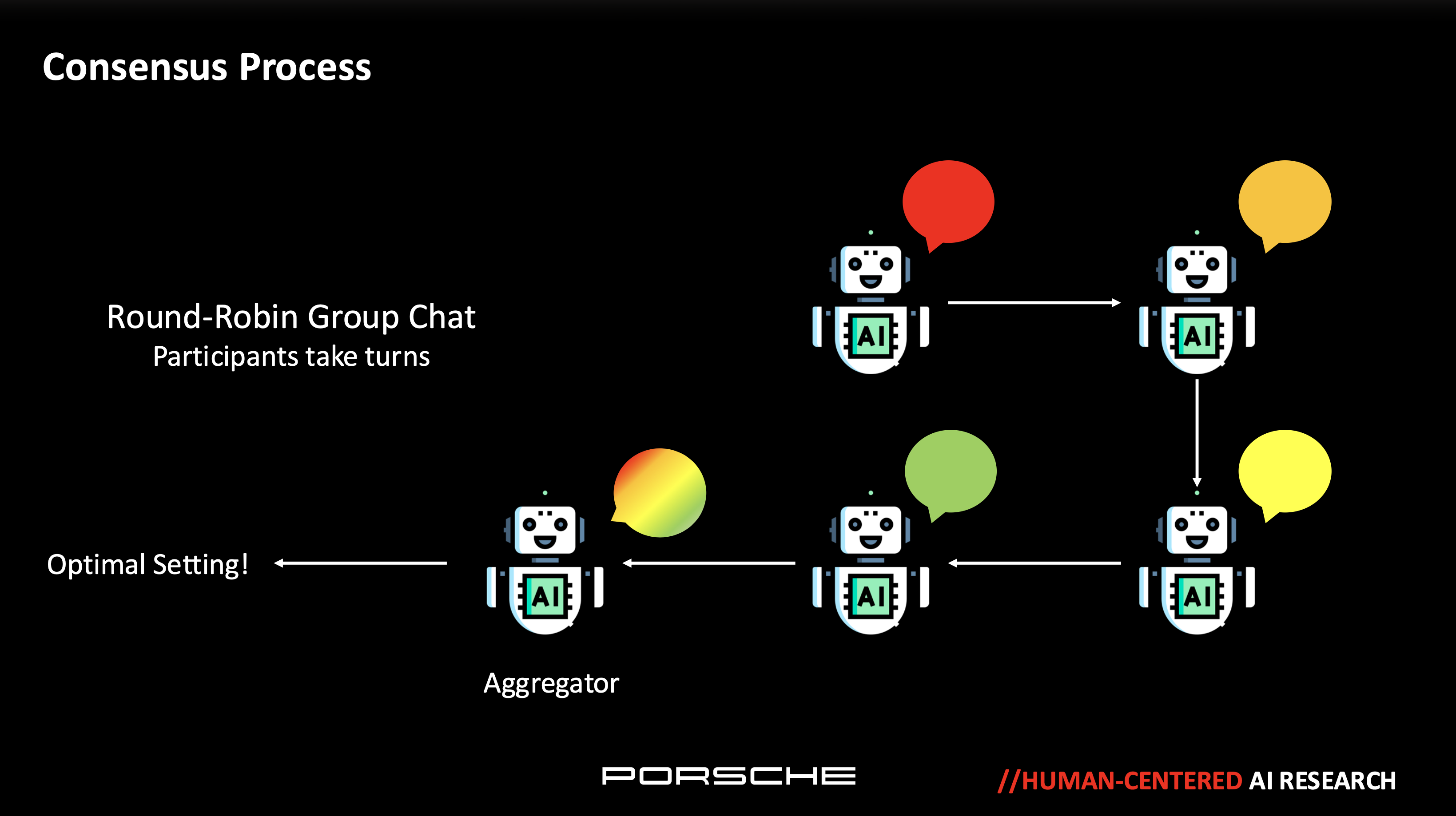

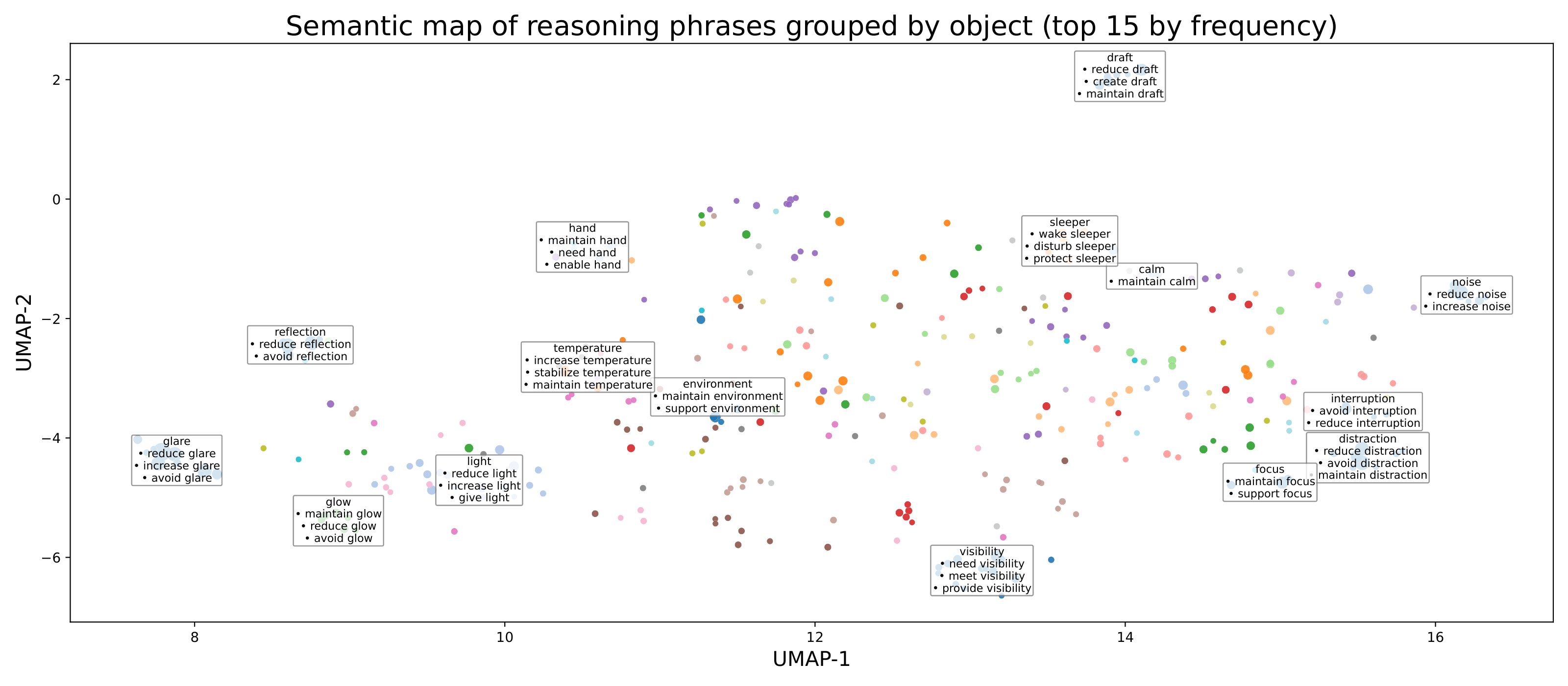

Autonomous vehicles handle the road, but not the room. When one passenger wants to sleep (dim lights, silence) while another works (bright lights, conference call), how should the system resolve competing passenger needs and preferences?

- Multi-Agent Negotiation Framework: I architected a system in which each occupant is represented by an AI agent that advocates for their preferences. Using Large Language Models (LLMs), these agents negotiate a cabin configuration through natural language reasoning, for example: "Lower volume to 15% for sleeping Passenger A, maintain ambient lighting for reading Passenger B."
- Three-Axis Design Space:
  - User Action: occupant behaviors (sleeping, reading, working)
  - System Reaction: vehicle responses (adjust seat, modify climate)
  - Reasoning: AI-generated contextual justifications
- Validation Strategy: I conducted a two-step validation: (1) simulation experiments that tested decision-making in realistic scenarios, and (2) human alignment surveys in which participants judged whether the agents' reasoning aligned with human expectations. The results indicated that the agents reached reasonable solutions through consensus, with 96% of scenarios accepted by the participants.
- Validated Rationale Analysis: I developed an NLP pipeline to analyze the rationales that participants agreed with. I parsed responses into context, setting, and rationale, extracted normalized verb-object phrases from the rationales, and clustered them semantically using sentence embeddings and BERTopic. By weighting frequent phrases and filtering out generic preference language, I identified the underlying contextual factors (Figure 2).
- Contextual Inquiry (Field Test): To prepare for real-world field testing, I trained a compact in-car decision model in three stages. First, I performed supervised fine-tuning on scenarios that participants accepted ("Yes") to teach the model to generate structured cabin settings with clear rationales. Next, I applied a Chain-of-Hindsight-style revision step using the feedback from rejected ("No") scenarios to learn targeted corrections. Finally, I used KTO preference alignment on balanced Yes/No labels to shift the model toward outputs that match human acceptability. To evaluate performance, I conducted a field test with interaction design experts at Porsche.
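
The three training stages described above each draw on a different slice of the survey responses. The sketch below shows one way that split could look. It is an illustration under stated assumptions, not the project's actual pipeline: the `SurveyRecord` fields, the `build_stage_datasets` helper, and the choice to balance labels by downsampling the larger class are all hypothetical. The `prompt`/`completion`/`label` layout is a common shape for unpaired preference data and is assumed here as well.

```python
import random
from dataclasses import dataclass


@dataclass
class SurveyRecord:
    """One survey response (hypothetical schema, not from the project)."""
    scenario: str       # cabin context shown to the participant
    proposal: str       # negotiated cabin setting plus its rationale
    accepted: bool      # True for "Yes", False for "No"
    feedback: str = ""  # free-text reason attached to a "No"


def build_stage_datasets(records, seed=0):
    """Split survey records into the inputs for the three training stages."""
    accepted = [r for r in records if r.accepted]
    rejected = [r for r in records if not r.accepted]

    # Stage 1 (supervised fine-tuning): accepted proposals only.
    sft = [{"prompt": r.scenario, "completion": r.proposal} for r in accepted]

    # Stage 2 (hindsight-style revision): rejected proposals that carry
    # feedback. The corrected target output is not constructed here.
    revision = [
        {"prompt": r.scenario, "rejected": r.proposal, "feedback": r.feedback}
        for r in rejected
        if r.feedback
    ]

    # Stage 3 (KTO-style alignment): equal numbers of Yes and No examples.
    # Assumption: balance by downsampling the larger class.
    rng = random.Random(seed)
    n = min(len(accepted), len(rejected))
    balanced = rng.sample(accepted, n) + rng.sample(rejected, n)
    rng.shuffle(balanced)
    kto = [
        {"prompt": r.scenario, "completion": r.proposal, "label": r.accepted}
        for r in balanced
    ]
    return sft, revision, kto
```

With an acceptance rate as high as the one reported, downsampling would discard most accepted examples in the third stage; reweighting the two classes instead would be an equally plausible reading of "balanced".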

Project Gallery